Alex_B


I do not understand why Nikon and Canon do not use these; from reading about them, they sound far superior to a CMOS or CCD. There is zero interpretation of color, just a three-layer sensor. Anyone have an explanation?

Of course there is "interpretation" of colour, and there always will be, since sensor pixels do not deliver an absolute, "true" colour. That interpretation always happens later, when the electronic signal is translated into a brightness value for each colour. There is no difference here between three-layered sensors and single-layer Bayer-pattern sensors. The latter do need to interpolate the colour information between pixels with different colour sensitivity, though...
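To make the interpolation point concrete, here is a minimal sketch of what a Bayer sensor has to do. It assumes an RGGB filter layout and a plain average over neighbouring photosites (roughly bilinear demosaicing). Real cameras use far more sophisticated algorithms, and the function names below are made up for illustration only. The mosaic is assumed to be at least 2x2 so every colour has a neighbour to borrow from.

```python
# Illustrative sketch only: assumes an RGGB Bayer layout and simple
# neighbour averaging. Not any manufacturer's actual demosaicing method.

def bayer_colour(row, col):
    """Return the colour the filter at (row, col) passes in an RGGB layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def interpolate(mosaic, row, col, colour):
    """Estimate `colour` at (row, col).

    If the photosite measured that colour itself, return the measurement.
    Otherwise average the photosites in the surrounding 3x3 block that did
    measure it. This is the "guessing" a single-layer sensor cannot avoid.
    """
    if bayer_colour(row, col) == colour:
        return float(mosaic[row][col])
    height, width = len(mosaic), len(mosaic[0])
    samples = []
    for r in range(max(0, row - 1), min(height, row + 2)):
        for c in range(max(0, col - 1), min(width, col + 2)):
            if bayer_colour(r, c) == colour:
                samples.append(mosaic[r][c])
    return sum(samples) / len(samples)


def demosaic(mosaic):
    """Turn a single-value-per-pixel mosaic into (R, G, B) tuples per pixel."""
    return [
        [
            tuple(interpolate(mosaic, r, c, ch) for ch in "RGB")
            for c in range(len(mosaic[0]))
        ]
        for r in range(len(mosaic))
    ]
```

In this sketch, two of the three colour values at every pixel are estimates borrowed from neighbours. A three-layered sensor measures all three at each location and can skip this step, but its raw values still have to be translated into colours afterwards.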

As for why Canon and Nikon do not use those three-layered sensors: both have implemented technologies that work and that they are still optimising. Three-layered sensors solve some issues but, I am sure, create new problems.

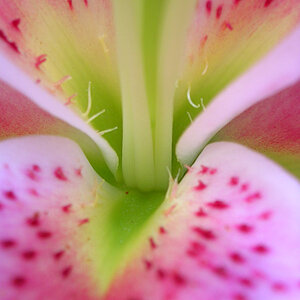

So do we have any real examples where we can see that images from three-layered sensors are really superior to those from single-layer sensors? I agree that in principle you should be able to get better colour sharpness/colour resolution. But are these new sensors there yet?

Oh, and what would that mean for image quality in a world where a single-layer CMOS or CCD is not the limiting factor anyway, but the lens is?

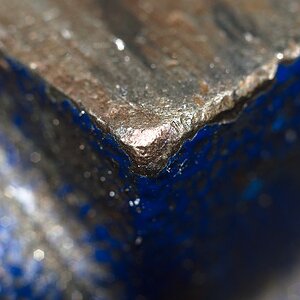
